EPFL — PhD Research · 2017 – 2021

Personalized Telerobotics via Fast Machine Learning

IEEE RA-L 2019 · 32 citations

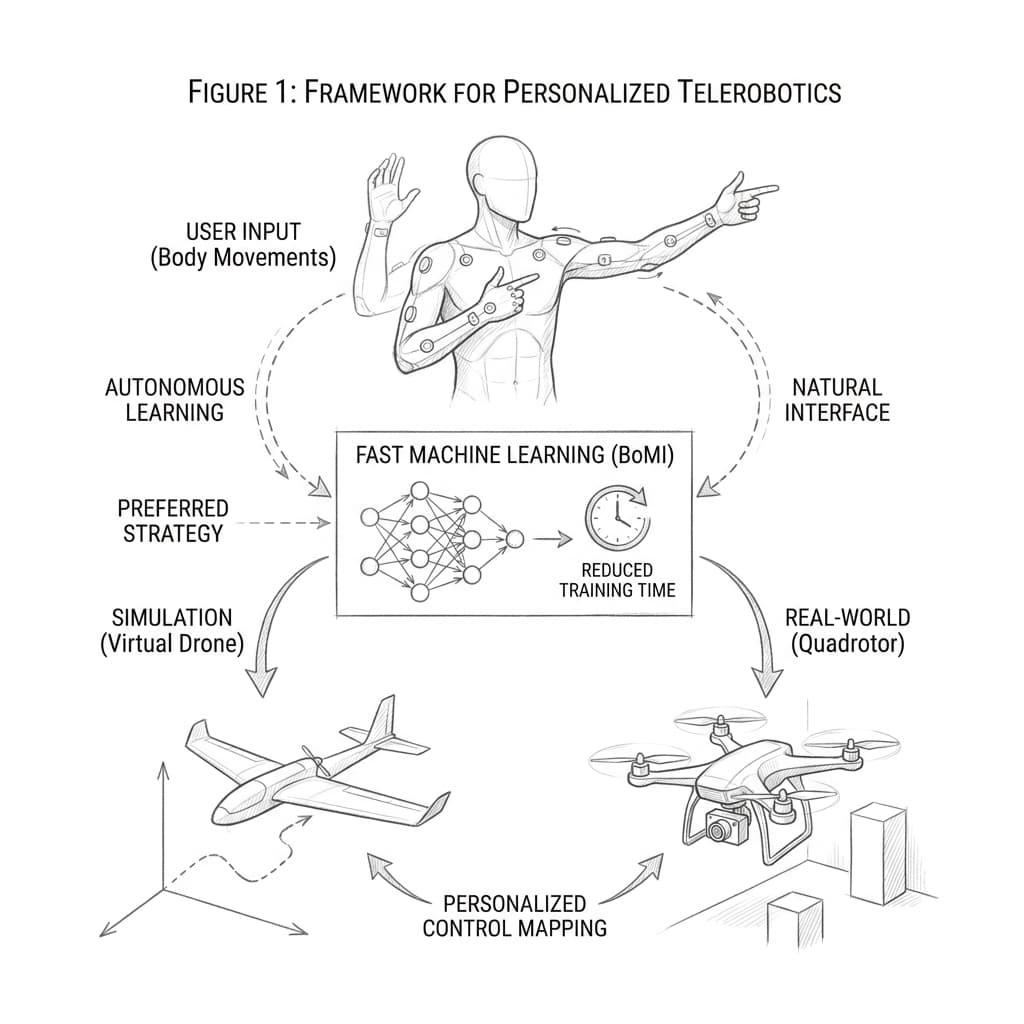

Teaching a drone to respond to your body takes time — and that time varies dramatically between people. Traditional body-machine interfaces use a one-size-fits-all mapping from motion to command, ignoring how differently individuals naturally move.

This paper introduced a fast machine learning approach that builds a personalized interface for each user in just a few minutes. Rather than prescribing which body segments to use or how to move them, the system observes a short session of spontaneous motion and learns a compact, user-specific mapping aligned with the individual's natural movement repertoire.
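As a rough illustration of the idea, and not the method from the paper, the sketch below fits a per-user mapping from a short calibration session. It assumes a hypothetical data format (a list of frames, each a list of body-sensor readings) and uses a deliberately simple stand-in technique: rank the channels by how much the user moved them, keep the most active ones, and normalize each by that user's own range. The names `fit_personal_mapping`, `to_command`, and `gain` are invented for this example.

```python
from statistics import mean, pstdev


def fit_personal_mapping(session, n_commands=2):
    """Pick the channels this user moved most during calibration.

    session: list of frames, each a list of sensor readings (assumed format).
    Returns a list of (channel_index, centre, spread) tuples.
    """
    n_channels = len(session[0])
    columns = [[frame[c] for frame in session] for c in range(n_channels)]
    stats = [(mean(col), pstdev(col)) for col in columns]
    # Rank channels by spread: larger spread means the user relied on it more.
    ranked = sorted(range(n_channels), key=lambda c: stats[c][1], reverse=True)
    return [(c, stats[c][0], stats[c][1]) for c in ranked[:n_commands]]


def to_command(mapping, frame, gain=0.5):
    """Map one motion frame to commands clipped to the range [-1, 1]."""
    commands = []
    for channel, centre, spread in mapping:
        # Normalize by the user's own calibration statistics.
        z = (frame[channel] - centre) / spread if spread > 0 else 0.0
        commands.append(max(-1.0, min(1.0, gain * z)))
    return commands


# Toy calibration session: three channels, four frames (made-up numbers).
session = [
    [0.0, 10.0, 1.0],
    [0.1, -10.0, 3.0],
    [0.0, 10.0, -1.0],
    [-0.1, -10.0, -3.0],
]
mapping = fit_personal_mapping(session)
print([channel for channel, _, _ in mapping])
print(to_command(mapping, [0.0, 20.0, 0.0]))
```

In this toy session the user barely moves channel 0, so the mapping ignores it and drives the two commands from channels 1 and 2. A different user with a different movement habit would get a different channel selection from the same code, which is the point of personalizing the interface.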

In a drone teleoperation task with a diverse group of participants, personalized interfaces consistently outperformed generic ones in both task performance and user comfort, with calibration taking minutes rather than hours. Published in IEEE Robotics and Automation Letters, cited over 30 times.